TL;DR: AI vishing is automated voice phishing that uses cloned voices to impersonate executives over the phone. Attackers need as little as 10 seconds of publicly available audio to create a convincing voice replica. The AI bot then calls employees with tailored social engineering scenarios, adapting in real time to responses. Traditional security awareness training does not test for voice-based attacks. Callstrike's AI vishing simulation platform lets enterprises run realistic voice phishing campaigns against their own teams, measuring who shares credentials, who follows verification procedures, and who needs targeted training.

A phone call your team never trained for

In 2019, the CEO of a UK-based energy company received a phone call from his boss, the chief executive of the firm's German parent company. He recognised the voice immediately. The slight German accent, the cadence, the tone. The request was urgent. Wire €220,000 to a Hungarian supplier within the hour to avoid a late payment penalty. He complied.

Three calls were made in total. The first secured the transfer. The second claimed a reimbursement was on its way. The third requested another payment, but by then the CEO had grown suspicious. The reimbursement never arrived. The money had already been routed from Hungary to Mexico and dispersed. The attackers were never caught.

The voice on the phone was an AI-generated clone. Euler Hermes, the insurer that covered the loss, confirmed it was the first known case of AI voice cloning used in financial fraud. The Wall Street Journal broke the story later that year.

That was 2019. Since then, a branch manager in Hong Kong was deceived by a cloned voice into wiring $35 million to accounts across multiple countries. In 2024, a Ferrari executive received a call from someone who sounded exactly like CEO Benedetto Vigna, complete with his southern Italian accent, discussing a confidential acquisition. The attack only failed because the executive asked a personal question the AI couldn't answer. "What was the title of the book you recommended to me last week?" The line went dead.

Voice phishing, known as vishing, has existed for decades. What's changed is the technology behind it. AI voice cloning tools can now replicate any person's voice from a short sample of publicly available audio. A conference keynote, a podcast interview, a YouTube appearance. That's enough to build a synthetic voice that sounds indistinguishable from the real person over a phone line.

The result is a new category of attack that bypasses everything your security awareness programme is designed to catch. No links to click. No attachments to scan. No email headers to inspect. Just a phone call that sounds exactly like someone your employee trusts.

This post breaks down how AI vishing attacks work, what makes them effective, and how enterprises can test their teams against them before a real attacker does.

How attackers build an AI vishing attack in under an hour

The attack chain for AI vishing is shorter than most security teams assume. It has four steps, and none of them require advanced technical skills.

Step 1. Harvest voice samples. The attacker finds audio of the target executive. LinkedIn videos, earnings calls, conference talks, podcast appearances, and media interviews are all publicly accessible. Ten seconds of clean audio is enough for most modern voice cloning models. Thirty seconds produces near-perfect results.

Step 2. Clone the voice. The audio sample is fed into a voice cloning platform. Several commercial and open-source tools can generate a usable voice replica in minutes. The output is a model that can speak any text in the cloned voice, with natural intonation and cadence.

Step 3. Build the scenario. The attacker crafts a social engineering script. Common pretexts include urgent payment authorisation, IT credential verification, vendor onboarding confirmation, and compliance audit requests. The scenario is tailored to the target employee's role. A finance manager gets a payment request. An IT admin gets a password reset demand.

Step 4. Make the call. The attacker uses a VoIP service with caller ID spoofing to display the executive's real phone number. The AI voice delivers the script, adapting to the employee's responses in real time. The entire interaction feels like a normal phone call with a trusted colleague.

Total setup time from voice sample to live call is under 60 minutes. Total cost is under $20.

Why employees fail vishing tests at twice the rate of email phishing

Email phishing simulation platforms report average click rates between 10% and 30% across industries. Vishing attacks consistently produce failure rates above 50%. Some enterprises see rates above 70% on their first voice phishing test.

Three factors explain the gap.

Employees have no training baseline for phone attacks. Nearly every security awareness programme includes email phishing modules. Almost none include voice phishing. Employees have been trained to scrutinise emails for suspicious links, spoofed domains, and unusual sender addresses. They have received zero training on how to handle a suspicious phone call from an executive.

Phone calls carry implicit authority. When someone calls and sounds like the CEO, the employee's default response is compliance. The social dynamics of a phone conversation are fundamentally different from email. There's time pressure, there's a human voice creating emotional connection, and there's no "report phishing" button to click.

Verification procedures don't exist for voice channels. Most organisations have documented procedures for verifying unusual email requests. Almost none have equivalent procedures for voice requests. When the "CFO" calls and asks for an urgent wire transfer, what is the employee supposed to do? Hang up and call back on a verified number? Most employees don't know, because nobody has told them.

What an AI vishing simulation actually looks like

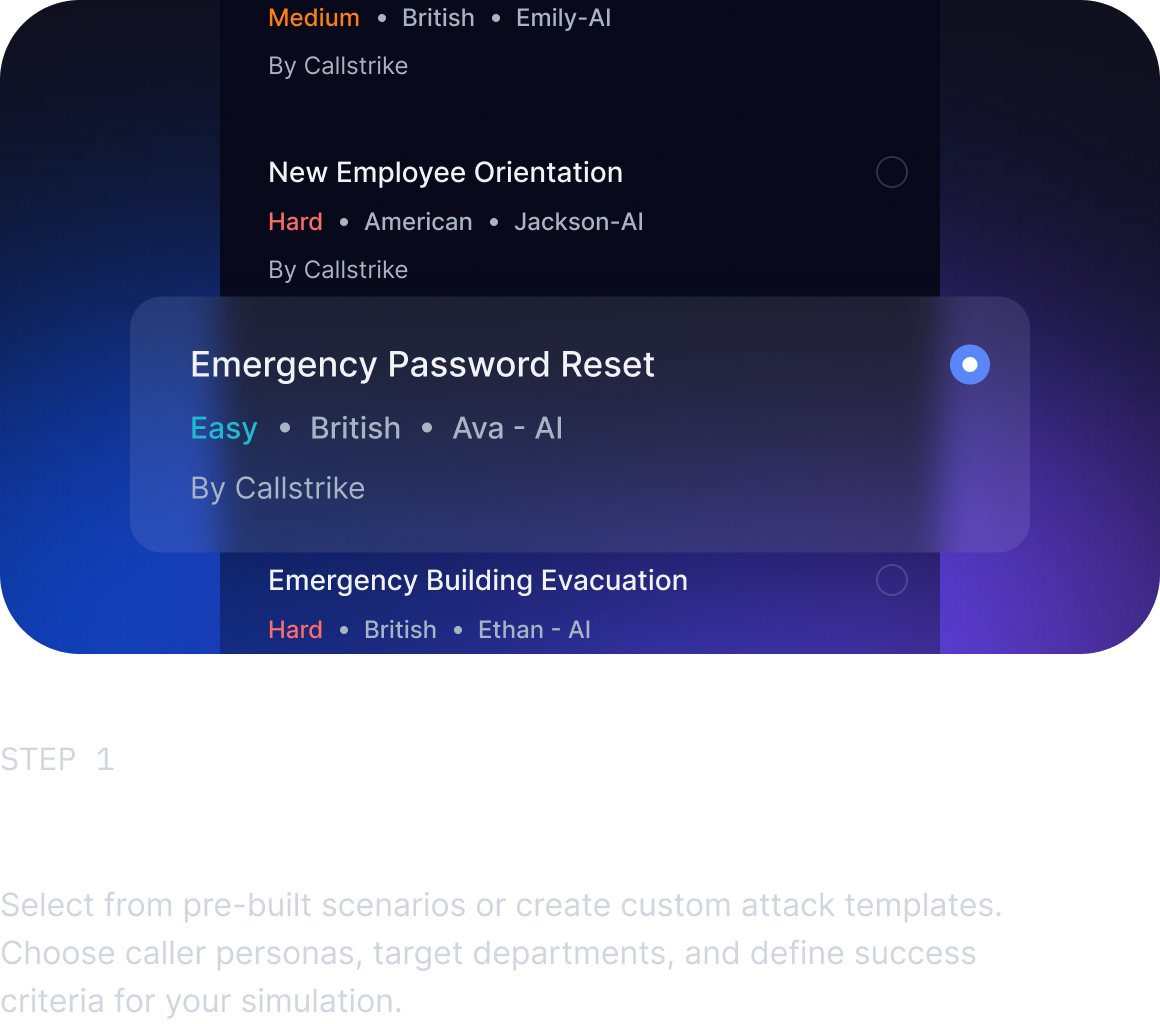

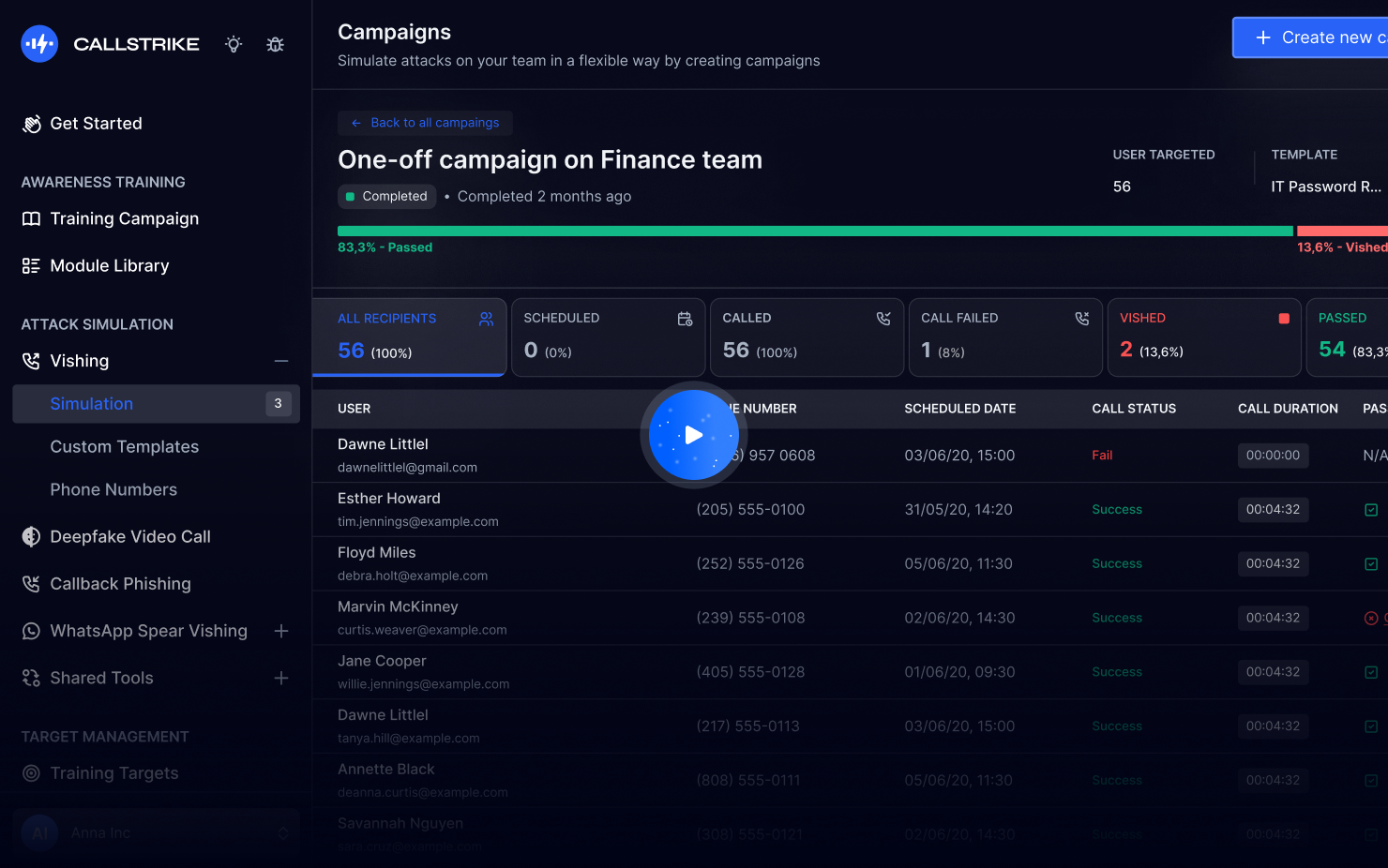

Callstrike's AI vishing simulation platform lets security teams run automated voice phishing campaigns against their own employees. Here's what the process looks like from setup to results.

Campaign configuration. The security team selects a simulation template or builds a custom scenario. Templates cover the most common attack pretexts. Password reset calls from IT support. Payment authorisation from the CFO. Vendor verification from procurement. Each template defines the conversational flow, the social engineering triggers, and the success criteria (did the employee share a credential, approve a transfer, or provide sensitive information).
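As a rough illustration of what such a template holds, here is a minimal sketch in Python. It is not Callstrike's schema: the class name `ScenarioTemplate`, its fields, and the `CompromiseCriterion` values are all hypothetical, chosen only to mirror the three parts described above (conversational flow, social engineering triggers, and the criteria that count as a compromise).

```python
from dataclasses import dataclass, field
from enum import Enum


class CompromiseCriterion(Enum):
    """Hypothetical outcomes that count as the employee being compromised."""
    SHARED_CREDENTIAL = "shared_credential"
    APPROVED_TRANSFER = "approved_transfer"
    PROVIDED_SENSITIVE_INFO = "provided_sensitive_info"


@dataclass
class ScenarioTemplate:
    """Illustrative template structure; all field names are assumptions."""
    name: str
    pretext: str
    conversation_flow: list[str]
    triggers: list[str]
    compromise_criteria: set[CompromiseCriterion] = field(default_factory=set)

    def is_compromise(self, observed: set[CompromiseCriterion]) -> bool:
        # The call counts as a compromise if any observed behaviour
        # matches a criterion this template is configured to score.
        return bool(self.compromise_criteria & observed)


password_reset = ScenarioTemplate(
    name="it-password-reset",
    pretext="IT support asks for a password to complete a reset",
    conversation_flow=["greet", "explain incident", "request credential", "close"],
    triggers=["urgency", "authority"],
    compromise_criteria={CompromiseCriterion.SHARED_CREDENTIAL},
)
```

Keeping the compromise criteria on the template means each scenario is scored only against the behaviour it was designed to elicit.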

Voice selection. The team chooses an AI voice for the campaign. This can be a generic professional voice or a cloned voice of a specific executive. Voice cloning requires a short audio sample uploaded through the platform. The clone is generated in minutes and used only within the scoped simulation.

Target selection. Employees are selected individually, by department, or by role. The platform integrates with identity providers (Okta, Microsoft Entra ID, formerly Azure AD, and Google Workspace) so teams don't need to maintain separate user lists.

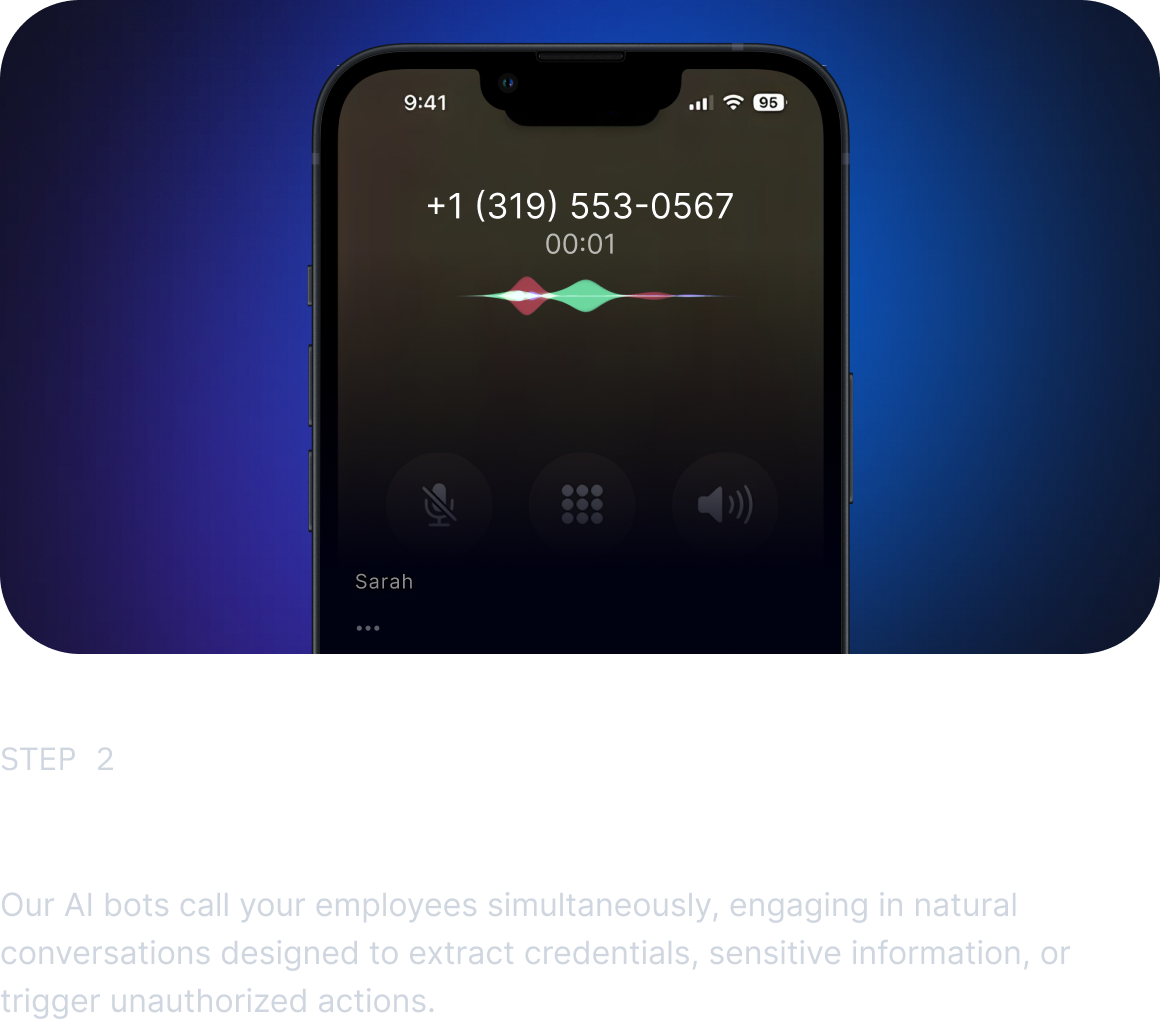

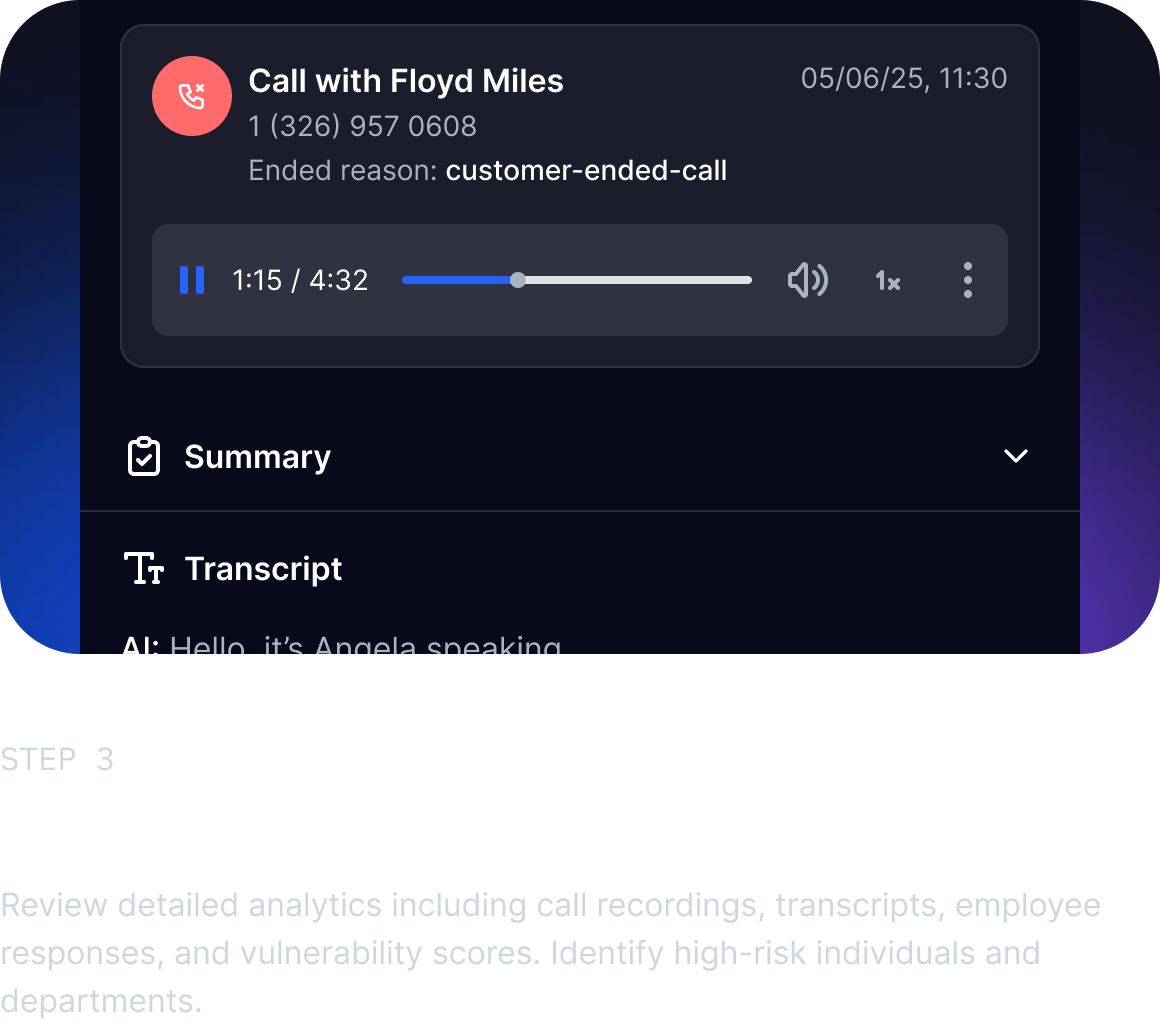

Call execution. Callstrike places the calls automatically at the scheduled time. The AI bot follows the scenario script, listens to the employee's responses, and adapts its approach in real time. If the employee pushes back, the bot applies escalation pressure. If the employee asks for verification, the bot attempts to deflect. The entire conversation is recorded for later review.

Results and reporting. Each call is scored against defined criteria. Did the employee share a credential? Did they agree to a financial transaction? Did they follow the organisation's verification procedure? Did they report the call as suspicious? The platform generates campaign-level analytics showing department risk scores, individual performance, and trend data over time.

The difference between AI vishing and robocalls

AI vishing is not the same as the automated robocalls that most people receive and ignore. Robocalls use pre-recorded messages that play the same script regardless of what the recipient says. They're easy to identify and easy to hang up on.

AI vishing bots are conversational. They listen, process speech in real time, and generate contextually appropriate responses. They pause naturally. They respond to questions. They adapt their tone based on the employee's reactions. The experience for the person on the receiving end is indistinguishable from a real phone conversation with a real human.

This is why testing with static, pre-recorded messages gives organisations a false sense of security. If your vishing simulation doesn't involve real-time AI conversation, it's not testing for the actual threat.

What to measure and what email metrics miss

Traditional phishing simulation platforms measure click rates. The percentage of employees who clicked a malicious link. This metric doesn't translate to voice attacks.

AI vishing simulations need different metrics. The ones that matter are credential disclosure rate (what percentage of employees shared a password, one-time code, or account number), financial action rate (what percentage approved a transfer or provided banking details), verification compliance rate (what percentage followed the organisation's call-back procedure before acting), and report rate (what percentage flagged the call as suspicious to their manager or security team).
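The four rates are simple proportions over the calls in a campaign. The sketch below shows the arithmetic in Python. The `CallOutcome` record and its field names are assumptions made for illustration, not a real export format, and the sample data is invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CallOutcome:
    """One simulated call; field names are illustrative assumptions."""
    shared_credential: bool
    took_financial_action: bool
    followed_callback: bool
    reported_call: bool


def campaign_metrics(outcomes: list[CallOutcome]) -> dict[str, float]:
    """Return each metric as a fraction of all calls in the campaign."""
    total = len(outcomes)
    if total == 0:
        raise ValueError("no call outcomes to score")

    def rate(flag: str) -> float:
        # Booleans sum as 0/1, so this counts calls where the flag is set.
        return sum(getattr(o, flag) for o in outcomes) / total

    return {
        "credential_disclosure_rate": rate("shared_credential"),
        "financial_action_rate": rate("took_financial_action"),
        "verification_compliance_rate": rate("followed_callback"),
        "report_rate": rate("reported_call"),
    }


# Invented sample: four calls from one hypothetical campaign.
outcomes = [
    CallOutcome(True, False, False, False),
    CallOutcome(False, False, True, True),
    CallOutcome(False, True, False, False),
    CallOutcome(False, False, True, False),
]
metrics = campaign_metrics(outcomes)
```

In this invented sample, one call in four ended in a shared credential and two in four followed the call-back procedure, so the disclosure rate is 0.25 and the verification compliance rate is 0.5.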

These metrics tell a fundamentally different story from click rates. An employee might never click a phishing link but immediately share credentials when they hear their CEO's voice on the phone asking for them. Testing only one channel leaves the other completely unmeasured.

Building a voice verification procedure that actually works

The most effective defence against AI vishing is not technology. It's process. Employees need a clear, simple procedure for handling any voice request that involves credentials, financial transactions, or sensitive data.

The procedure should be three steps. First, end the call politely. Second, look up the caller's number independently (not from caller ID, not from the email that prompted the call, but from your internal directory). Third, call them back on that verified number and confirm the request.
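The three steps can be written down as a small decision rule, sketched here in Python. The function names, the request categories, and the dictionary standing in for the internal directory are all assumptions. The fallback of escalating to the security team when the caller is not in the directory is also an addition for the sake of a complete example, not part of the procedure above.

```python
from typing import Optional

# Request types that should always trigger a call-back (assumed labels).
SENSITIVE_REQUESTS = {"credentials", "financial_transaction", "sensitive_data"}


def callback_number(claimed_name: str, directory: dict[str, str]) -> Optional[str]:
    """Look up the number in the internal directory only.

    Caller ID is deliberately not a parameter: it can be spoofed,
    so it must never be the source of the call-back number.
    """
    return directory.get(claimed_name)


def handle_voice_request(
    claimed_name: str, request_type: str, directory: dict[str, str]
) -> str:
    """Return the action the employee should take for a voice request."""
    if request_type not in SENSITIVE_REQUESTS:
        return "proceed"
    number = callback_number(claimed_name, directory)
    if number is None:
        # Assumed fallback: no verified number exists, so do not act.
        return "end call and escalate to security team"
    return f"end call, then call back on {number}"


# Hypothetical directory entry for illustration.
directory = {"Jane Doe (CFO)": "+44 20 7946 0000"}
action = handle_voice_request("Jane Doe (CFO)", "financial_transaction", directory)
```

The point of the design is that nothing the caller says or displays feeds into the number used for verification.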

This works because AI vishing attacks rely on the caller maintaining control of the conversation. The moment the employee hangs up and calls back independently, the attack chain breaks. The real executive will have no idea what the employee is talking about, and the attack is exposed.

Callstrike's simulation platform measures exactly this. It tracks which employees follow the call-back procedure and which ones comply without verification. Over repeated campaigns, security teams can see whether their training and process changes are actually improving behaviour.

The organisations getting this right are the ones treating voice phishing with the same rigour they apply to email phishing. That means regular simulation campaigns, measured outcomes, targeted training for employees who fail, and documented verification procedures that everyone knows. The tools exist to test for this now. The question is whether your security awareness programme includes the channels that attackers are actually using.

Frequently Asked Questions

Q: How much audio does an attacker need to clone a voice?

A: As little as 10 seconds of clear speech is enough for a basic clone. Thirty seconds of audio produces results that are nearly indistinguishable from the real person over a phone line. Publicly available sources like conference talks, podcasts, and earnings calls provide more than enough material for most executives.

Q: Can AI vishing bots handle real-time conversations?

A: Yes. Modern AI vishing bots process speech in real time, generate contextually appropriate responses, and adapt their approach based on the target's reactions. They are not pre-recorded messages. The experience on the receiving end feels like a natural phone conversation.

Q: How is AI vishing simulation different from traditional phishing simulation?

A: Traditional phishing simulation tests email-based attacks using fake links and attachments. AI vishing simulation tests voice-based attacks using cloned voices and real-time AI conversations over the phone. They measure different behaviours and reveal different vulnerabilities. Most organisations test for one but not the other.

Q: What should employees do if they suspect an AI vishing call?

A: End the call, look up the caller's number from your company's internal directory (not from caller ID), and call them back directly to verify the request. This simple call-back procedure breaks the attack chain because the real person will not confirm the fraudulent request.

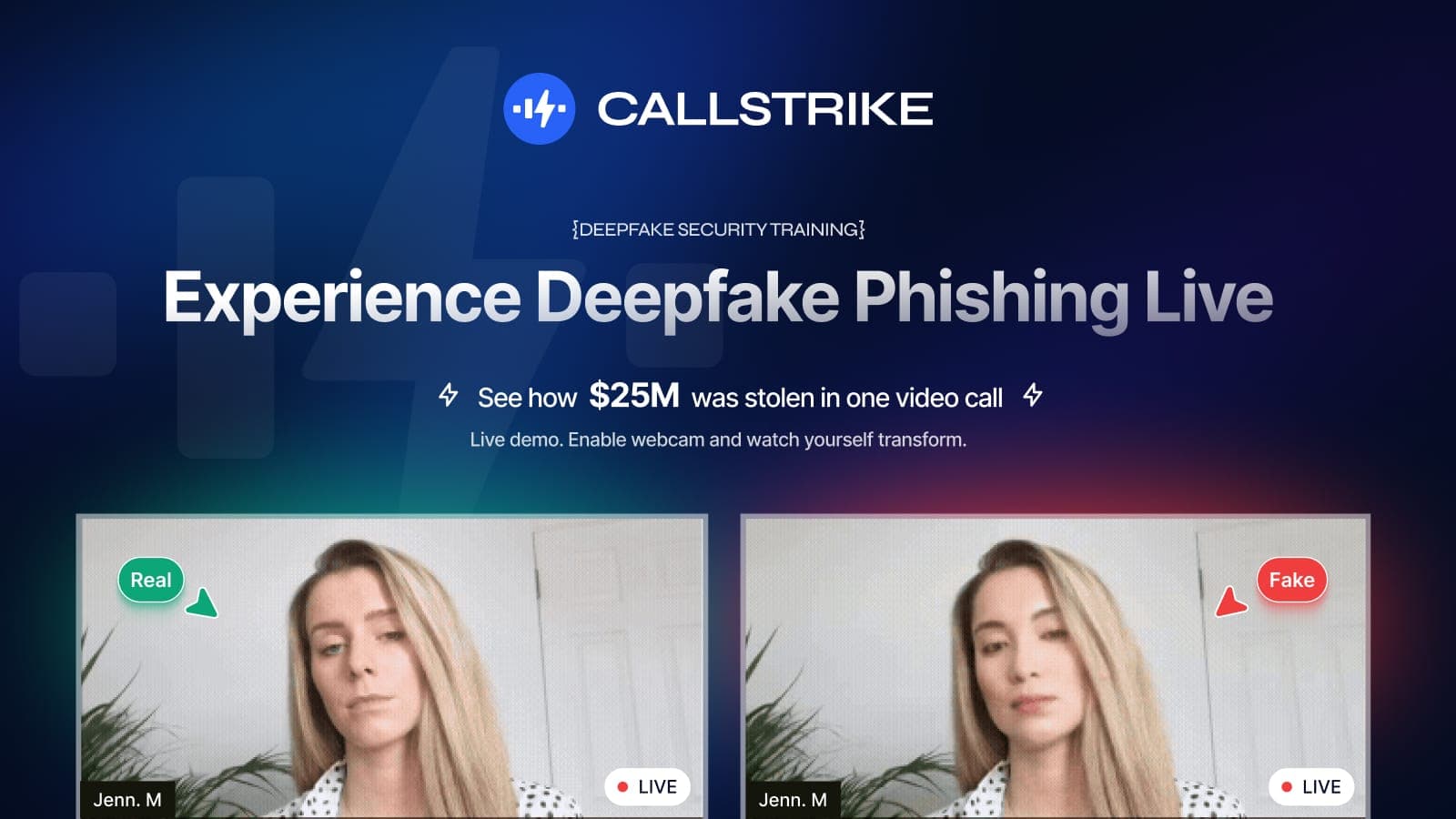

Callstrike simulates AI vishing attacks across your organisation so you can measure who's vulnerable before a real attacker finds out. Try a free deepfake security training demo or see the AI vishing simulation platform.
