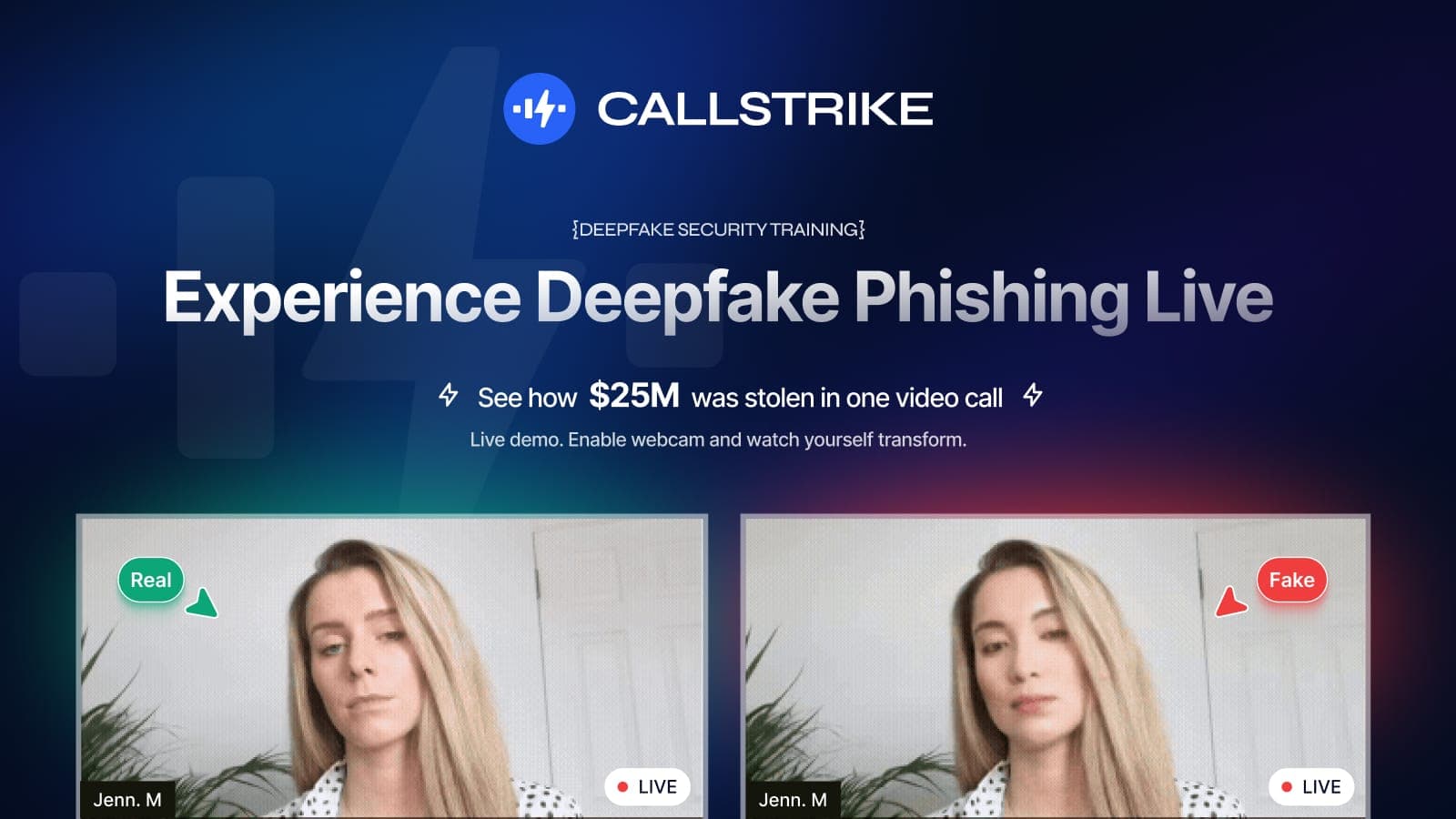

9 Out of 10 Employees Can't Spot a Deepfake Video Call. Can You?

TL;DR: Callstrike built a free browser-based deepfake security training tool that demonstrates real-time face-swapping in a simulated video call. Over 90% of security professionals who try it cannot distinguish the real feed from the synthetic one. No download or account required. Enterprise security teams can use it to demonstrate why deepfake readiness needs to be part of their awareness programme, alongside process-based defences like out-of-band verification for sensitive requests.

In 2024, a finance employee in the Hong Kong office of Arup, the multinational engineering firm, received an urgent message that appeared to come from the company's CFO about a confidential transaction. To verify it, they were invited to a video call with the CFO and several colleagues. Everyone on the call looked and sounded real. Faces, voices, mannerisms. The CFO gave instructions to transfer funds. When the employee hesitated, the other colleagues on the call confirmed it was legitimate.

The employee made 15 transfers to multiple bank accounts. Roughly US$25 million in total.

Every other person on that call was an AI-generated deepfake. The CFO. The colleagues. All of them.

The technology that made this possible is now freely available. The cost of running it has dropped to near zero. And the people responsible for defending against it, your security team, have almost certainly never tested whether your employees can spot it.

I know this because we built a tool that lets anyone experience a deepfake for themselves. In a browser. In 10 seconds. No downloads, no sign-up.

Thousands of security professionals have tried it. The results are consistent. Over 90% cannot reliably distinguish the real feed from the synthetic one.

Try the free deepfake security training demo

This article explains why that number matters, what attackers are actually doing with this technology right now, and how you can test your own ability to detect it.

The gap in your security training

Every enterprise runs phishing simulations. You send fake emails, track who clicks, and train the ones who fail. This model has been the backbone of security awareness programmes for over a decade.

But it only trains people against one attack vector. Email.

Attackers have moved on. The most sophisticated social engineering attacks in 2025 and 2026 don't come through email at all. They come through:

- Phone calls where the voice on the other end is a cloned version of someone the target trusts

- Video conferences where the person on screen is wearing someone else's face in real time

- WhatsApp messages with voice notes that sound exactly like the CEO

- Callback schemes where a legitimate-looking email prompts the target to call a number staffed by an AI bot

None of these are covered by traditional phishing simulation platforms. Not KnowBe4. Not Proofpoint. Not Hoxhunt. Their platforms were built for email. The threat has moved to voice, video, and messaging.

What attackers are doing right now

This is not theoretical. Here is what is happening in the wild right now.

Voice cloning from public audio. An attacker finds a 30-second clip of your CFO speaking at a conference. From that clip, they generate a voice model that can say anything in real time. They call your finance team pretending to be the CFO and request an urgent wire transfer. To the person answering, the voice is indistinguishable from the real one.

Deepfake video conference infiltration. An attacker joins a Zoom, Teams, or Google Meet call using face-swap technology that runs in a standard browser. No GPUs. No specialised software. They appear on screen as a trusted participant. They ask questions, make requests, and observe sensitive discussions.

WhatsApp voice note impersonation. A target receives a WhatsApp voice message from what sounds like their manager. The message asks them to share a document, approve a payment, or provide login credentials. The voice is synthetic but the recipient has no reason to question it.

AI-powered callback phishing. An employee receives an email about a suspicious charge on their corporate card. The email includes a phone number to call. When they dial, an AI voice bot engages them in a conversation designed to extract credentials, financial information, or remote access.

These attacks work because they exploit trust in channels that employees consider safe. Nobody suspects a video call. Nobody second-guesses a voice note from their boss.

Why 90% of people fail the deepfake test

We built a free interactive tool that demonstrates real-time face-swapping in a simulated video call environment. It shows you two video feeds side by side. One real, one synthetic. Your job is to identify which is which.

The technology works by mapping facial landmarks from a source image onto a live video feed. It captures expressions, head movements, and lighting in real time. The result is a video feed that looks natural to the human eye.

Most people focus on the wrong things when trying to spot deepfakes. They look for obvious glitches, pixelation, or unnatural movement. Modern deepfake technology has largely solved these problems. Most of the artifacts that existed two years ago are gone.

What does give deepfakes away, when anything does at all:

Micro-expression timing. Synthetic faces occasionally lag behind real emotional responses by a fraction of a second. This is nearly impossible to detect in a normal conversation.

Edge blending. Where the face meets the hairline or jawline, there can be subtle inconsistencies in skin tone or texture. On a typical video call with compression artifacts, these are invisible.

Lip sync under pressure. When the source audio and video are both synthetic, lip movements can drift slightly during rapid speech. In a calm, measured conversation, this doesn't happen.

The honest answer is that detection by eye alone is no longer a reliable defence. If your security strategy depends on employees spotting deepfakes visually, it will fail.

What actually works

The solution is not better detection training. It is process-based defence combined with realistic simulation.

Verification protocols. Any request involving money, credentials, or sensitive data should require out-of-band verification. If your CFO calls asking for a wire transfer, your finance team should verify through a separate channel before acting, for example by calling back on a number taken from the company directory rather than one supplied during the call. This works regardless of how convincing the deepfake is.
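
To make the rule concrete, here is a minimal sketch of an out-of-band check as a finance or IT workflow might encode it. Everything in it is an assumption for illustration: the category names, the channel labels, the set of trusted contact sources, and the `may_proceed` function are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical labels, chosen for illustration only.
SENSITIVE_CATEGORIES = {"payment", "credentials", "sensitive_data"}
TRUSTED_CONTACT_SOURCES = {"company_directory", "hr_system"}


@dataclass(frozen=True)
class Request:
    requester: str        # who the request claims to come from
    category: str         # e.g. "payment", "credentials", "calendar"
    inbound_channel: str  # channel the request arrived on, e.g. "video_call"


@dataclass(frozen=True)
class Verification:
    channel: str          # channel used to confirm the request
    contact_source: str   # where the contact details were looked up
    confirmed: bool       # did the real person confirm the request?


def may_proceed(request: Request, verification: Optional[Verification] = None) -> bool:
    """Return True only if the request is safe to act on under the policy."""
    # Non-sensitive requests are outside the scope of this policy.
    if request.category not in SENSITIVE_CATEGORIES:
        return True
    # Sensitive requests need an explicit confirmation.
    if verification is None or not verification.confirmed:
        return False
    # The confirmation must use a different channel from the request itself.
    if verification.channel == request.inbound_channel:
        return False
    # Contact details must come from a trusted source, not from the requester.
    return verification.contact_source in TRUSTED_CONTACT_SOURCES


wire = Request("CFO", "payment", "video_call")
print(may_proceed(wire))
print(may_proceed(wire, Verification("phone", "company_directory", True)))
```

The check never looks at how convincing the caller was. A payment request that arrives on a video call is blocked until someone confirms it on a different channel, using contact details the attacker did not supply.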

Simulation and testing. You cannot know how your organisation will respond to a deepfake attack without testing it. Run simulated deepfake video calls against your executive team. Run AI vishing campaigns against your finance department. Run WhatsApp phishing exercises against your senior leadership. Measure the results. Train on the failures.
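
As a rough illustration of "measure the results", the sketch below computes a failure rate per channel from simulation outcomes. The record format, the channel names, and the sample data are made up for the example. They are not real results and not the output of any specific platform.

```python
from collections import defaultdict


def failure_rates(results):
    """Compute the share of simulated attacks that succeeded, per channel.

    `results` is an iterable of (channel, complied) pairs, where `complied`
    is True when the target acted on the simulated request without verifying.
    """
    totals = defaultdict(int)
    failures = defaultdict(int)
    for channel, complied in results:
        totals[channel] += 1
        if complied:
            failures[channel] += 1
    return {channel: failures[channel] / totals[channel] for channel in totals}


# Illustrative sample data only.
sample = [
    ("video_call", True),
    ("video_call", True),
    ("video_call", False),
    ("vishing", True),
    ("vishing", False),
    ("whatsapp", False),
]

for channel, rate in failure_rates(sample).items():
    print(f"{channel}: {rate:.0%} failed")
```

Tracking the rate per channel rather than as one overall number shows where training should go first, in the same way a click-rate dashboard does for email.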

Channel-specific awareness. Your employees need to understand that video calls, phone calls, and messaging apps are now attack surfaces. Training that focuses exclusively on email is incomplete. It builds a false sense of security in every other channel.

Technical controls. Implement call verification systems. Use authenticated meeting links. Enable waiting rooms and host controls. These are not foolproof but they raise the bar for attackers.

Try it yourself

We built this tool because seeing is believing. Reading about deepfakes is one thing. Experiencing one is something else entirely.

The Callstrike Deepfake Security Training demo takes 10 seconds. It runs in your browser. No download. No account required. You will see a live face-swap in a simulated video call and you will try to spot which feed is real.

Try the free deepfake security training demo

Then share it with your team. If you run a security awareness programme, this is the simplest way to demonstrate why deepfake readiness needs to be part of it.

The question every CISO should be asking

Your organisation almost certainly runs phishing simulations. You probably have a click-rate dashboard. You might even have a mature security awareness programme with regular training cycles.

But have you ever tested whether your employees can spot a cloned voice on a phone call? Have you tested whether your board would notice a deepfake attendee in a video conference? Have you tested whether your finance team would verify a payment request that came via a WhatsApp voice note from the CEO?

If the answer is no, you are training for the last threat, not the current one.

The attackers have moved on. The question is whether your defences have moved with them.

Callstrike is the world's first enterprise deepfake phishing simulation platform. Book a free deepfake security training demo
