Why Deepfake Phishing Training Is More Important Than Ever

Deepfake technology has advanced rapidly, making it easier than ever to create highly realistic fake videos, audio clips, and images. While this innovation has positive uses, it has also opened the door to a new and dangerous form of cybercrime: deepfake phishing.

What Is Deepfake Phishing?

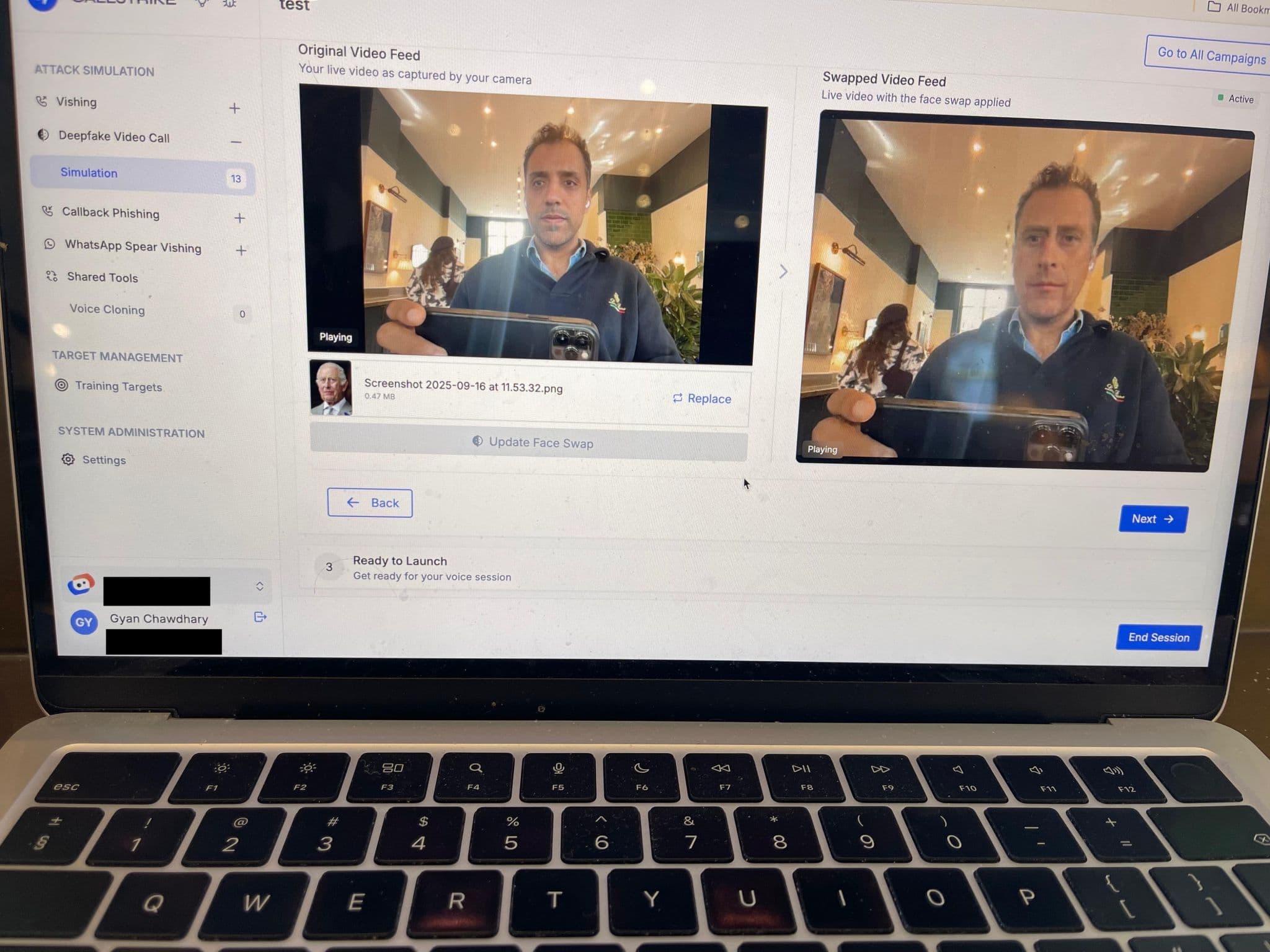

Deepfake phishing uses AI-generated audio or video to impersonate trusted individuals such as executives, managers, or even family members. Unlike traditional phishing emails, these attacks feel personal and convincing, and they often arrive as voice calls or video messages carrying urgent requests.

Why Traditional Security Awareness Isn’t Enough

Many employees are trained to spot suspicious links or poorly written emails. Deepfake attacks, however, bypass these red flags by leveraging familiar voices and faces. When a message looks and sounds authentic, even experienced staff can be caught off guard.

The Real-World Impact

Deepfake phishing has already led to:

- Unauthorized financial transfers

- Data breaches and credential theft

- Loss of trust in internal communications

- Significant financial and reputational damage

As the tools needed to create deepfakes become more accessible, organizations of all sizes are at risk.

How Deepfake Phishing Training Helps

Targeted training teaches employees to:

- Verify unusual or urgent requests through secondary channels

- Recognize behavioral red flags, not just technical ones

- Understand how AI can be used to generate or manipulate audio, video, and images

- Respond calmly and report suspicious activity quickly

This proactive approach turns employees into a strong first line of defense.
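
The verification habit described above can be written down as a simple decision rule. The following is a minimal sketch rather than a real security control: the `Request` fields, the `SENSITIVE_ACTIONS` set, and the `next_step` function are all hypothetical names invented for illustration, and an actual policy would define its own categories and escalation paths.

```python
from dataclasses import dataclass

# Hypothetical categories; a real policy would define its own list.
SENSITIVE_ACTIONS = {"wire_transfer", "credential_reset", "data_export"}


@dataclass
class Request:
    claimed_sender: str                 # who the caller or video claims to be
    action: str                         # what is being asked for
    urgent: bool                        # pressure to act immediately
    verified_out_of_band: bool = False  # confirmed via a separate, known channel


def next_step(req: Request) -> str:
    """Return the suggested response under this illustrative policy."""
    risky = req.action in SENSITIVE_ACTIONS or req.urgent
    if not risky:
        return "proceed"
    if req.verified_out_of_band:
        return "proceed"
    # A familiar voice or face is not treated as proof of identity.
    return "verify_and_report"


# Example: an urgent transfer request from someone who sounds like the CEO.
call = Request(claimed_sender="CEO", action="wire_transfer", urgent=True)
print(next_step(call))
```

Note that the rule never looks at how convincing the voice or video was. Under this assumption, only the nature of the request and a confirmation through a second channel decide the outcome.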

Conclusion

Deepfake phishing is not a future threat—it’s happening now. Investing in specialized training helps organizations stay ahead of attackers, protect sensitive information, and build a culture of informed skepticism. In a world where seeing and hearing are no longer guarantees of truth, awareness is essential.
