Deepfake Phishing

How AI-Powered Impersonation Is Redefining Cybercrime

In recent years, phishing attacks have evolved far beyond poorly written emails and obvious scam links. One of the most alarming developments in this space is deepfake phishing—a sophisticated form of social engineering that uses artificial intelligence to convincingly impersonate real people through audio, video, or images. As deepfake technology becomes more accessible and realistic, the risks to individuals and organizations continue to grow.

What Is Deepfake Phishing?

Deepfake phishing is a cyberattack technique where attackers use AI-generated or AI-manipulated media to impersonate trusted individuals such as CEOs, managers, colleagues, or even family members. These deepfakes may take the form of:

- AI-generated voice calls mimicking a known person

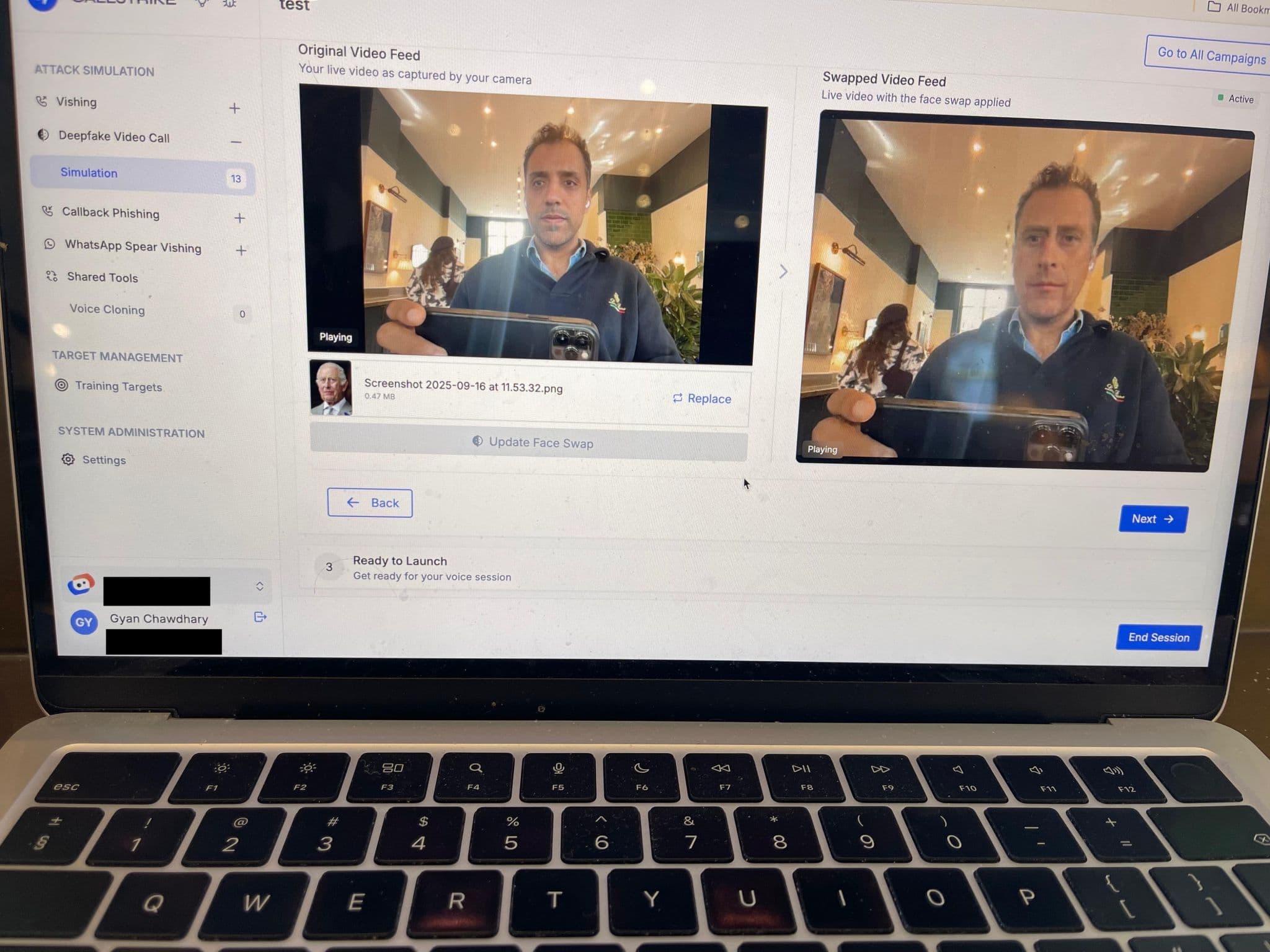

- Manipulated video messages appearing to come from a trusted authority

- Synthetic images or videos used to enhance credibility in scams

The goal is the same as traditional phishing: to trick victims into transferring money, sharing sensitive information, or granting access to systems.

How Deepfake Phishing Attacks Work

A typical deepfake phishing attack follows several steps:

1. Data Collection

Attackers gather audio, video, or images of the target person from public sources such as social media, company websites, or recorded meetings.

2. Deepfake Creation

Using machine learning models, attackers generate realistic audio or video that mimics the target’s voice, facial expressions, and mannerisms.

3. Social Engineering Execution

The deepfake is used in a phone call, video message, or live meeting to pressure the victim into taking urgent action.

4. Exploitation

Victims are convinced to wire funds, disclose credentials, or bypass normal security procedures.

Real-World Examples

Deepfake phishing is no longer theoretical. There have been documented cases where:

- Employees were tricked into transferring millions of dollars after receiving AI-generated voice calls from “executives.”

- Fraudsters used fake video conferencing appearances to impersonate senior leadership.

- Attackers combined deepfake audio with email phishing to add legitimacy to their scams.

These incidents highlight how convincing and dangerous deepfake-enabled attacks have become.

Why Deepfake Phishing Is So Effective

Deepfake phishing succeeds because it exploits fundamental human traits:

- Trust in familiar voices and faces

- Authority bias, especially when instructions come from executives

- Urgency and fear, which reduce critical thinking

- Remote work environments, where digital communication is the norm

Unlike traditional phishing, deepfake attacks often bypass skepticism because they “look” and “sound” real.

How to Defend Against Deepfake Phishing

While deepfake phishing is difficult to detect, there are effective countermeasures:

1. Verification Protocols

Establish strict verification processes for financial transactions or sensitive requests, such as call-backs or multi-person approvals.
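As an illustration only, the call-back and multi-person approval rules described here could be encoded as a simple policy check. The sketch below is a minimal example under stated assumptions: the names `PaymentRequest`, `confirm_callback`, `approve`, and `may_execute`, the threshold value, and the directory format are all hypothetical and not taken from any particular product.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

# Hypothetical policy value; a real threshold depends on the organization.
DUAL_APPROVAL_THRESHOLD = 10_000


@dataclass
class PaymentRequest:
    requester: str                    # person the request claims to come from
    amount: float
    callback_confirmed: bool = False  # True only after a directory call-back
    approvers: Set[str] = field(default_factory=set)


def confirm_callback(request: PaymentRequest, dialed_number: str,
                     directory: Dict[str, str]) -> bool:
    """Accept the call-back only if the dialed number is the one on file.

    A number supplied in the request itself (or by the caller) is not
    trusted, because an attacker controls that channel.
    """
    known = directory.get(request.requester)
    request.callback_confirmed = known is not None and dialed_number == known
    return request.callback_confirmed


def approve(request: PaymentRequest, approver: str) -> None:
    """Record an approval; the requester may not approve their own request."""
    if approver == request.requester:
        raise ValueError("requester cannot approve their own request")
    request.approvers.add(approver)


def may_execute(request: PaymentRequest) -> bool:
    """Require a confirmed call-back plus one or two distinct approvers."""
    required = 2 if request.amount >= DUAL_APPROVAL_THRESHOLD else 1
    return request.callback_confirmed and len(request.approvers) >= required
```

The design point is that no single conversation, however convincing, is enough to release funds: the request must also pass a check on a channel the attacker does not control and be approved by people other than the apparent requester.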

2. Employee Awareness Training

Educate staff about deepfake threats and encourage healthy skepticism—even when requests appear to come from leadership.

3. Multi-Factor Authentication (MFA)

Ensure MFA is required for accessing systems or approving critical actions, reducing the impact of stolen credentials.
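To make the idea concrete, here is a minimal sketch of one common second factor, a time-based one-time password (TOTP, RFC 6238), written with only the Python standard library. It assumes a shared secret has already been provisioned to the approver's authenticator; the function names `totp` and `verify_totp` are illustrative, and a production system would normally rely on a vetted library with rate limiting rather than this sketch.

```python
import hashlib
import hmac
import struct
import time


def totp(secret: bytes, at=None, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 TOTP code (HMAC-SHA1) for the given Unix time."""
    counter = int((time.time() if at is None else at) // step)
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, as in RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify_totp(secret: bytes, submitted: str, at=None, step: int = 30) -> bool:
    """Accept codes from the previous, current, or next time step."""
    now = time.time() if at is None else at
    candidates = (totp(secret, now + drift * step, step) for drift in (-1, 0, 1))
    return any(hmac.compare_digest(code, submitted) for code in candidates)


# RFC 6238 test secret at T = 59 seconds
print(totp(b"12345678901234567890", at=59))  # prints 287082
```

A cloned voice or face does not give the attacker the shared secret, so an approval step gated on a code like this still fails unless the victim is also persuaded to read the code out or enter it themselves, which is why MFA complements rather than replaces the verification protocols above.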

4. Technical Detection Tools

Leverage AI-based detection tools that analyze audio and video for signs of manipulation.

5. Limit Public Exposure

Reduce the amount of publicly available audio and video of executives and key personnel where possible.

The Future of Phishing

As AI technology continues to advance, deepfake phishing is likely to become more common and more convincing. Organizations must adapt by combining technology, policy, and human awareness to stay ahead of these threats.

Deepfakes represent a turning point in social engineering—where seeing and hearing is no longer believing. Vigilance, verification, and education are now essential defenses in the modern cybersecurity landscape.

Staying informed is the first step toward staying secure.
